Memory usage is another column on the task list that can help you understand what is happening under the hood of your computer. In my last blog, I wrote about CPU usage. Memory is similar to CPU in that it is a critical resource that affects computer performance and it can help evaluate malware on your system.

The Role of Memory

Without memory, often called RAM, your computer has Alzheimer’s. It may have the fastest processor in the world and the coolest programs, but it won’t do anything unless it can keep track of where it is. The processor pulls an instruction from memory, executes it, and puts the result back into memory to use later. Without memory, a processor doesn’t know what to do next or what it has already done; it is nearly useless.

Memory vs Storage

Memory has to be as fast as the processor, or the processor has to wait for data and instructions to be fetched from memory and for results to be stored in memory for later use. Using present technology, the fastest memory is volatile. By volatile, I don’t mean memory is liable to fly off the handle and jet to Maui without provocation. Instead, data stored in volatile memory flies to Maui, as far as I know, when the electricity is switched off. In any case, it disappears.

Speed and volatility make memory different from storage. Data that stays around between computing sessions resides in storage, which is useful, but not when speed is the main consideration. Usually storage is on a hard disk. Hard disks are much slower than memory chips, but they store more data at less expense and they are not volatile. In other words, powering down does not affect data stored on a disk.

As processors get faster, memory must also get faster, and speed is expensive. This makes memory a scarce and expensive commodity on computers. A laptop with 4 gigabytes of memory and a terabyte of storage has 250 times as much storage as memory. At today’s prices, 1 gigabyte of memory costs about the same as 200 gigabytes of storage. Speed costs.
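To put the price gap in perspective, here is a small back-of-the-envelope calculation. It takes the 200-to-1 price ratio above as given (a rough figure, not a quoted price), and the function and variable names are made up for illustration.

```python
# Assumption taken from the text: 1 GB of memory costs about
# as much as 200 GB of storage. Real prices vary.
STORAGE_GB_PER_MEMORY_GB = 200


def storage_equivalent_gb(memory_gb):
    """Return how many GB of storage cost about the same as memory_gb of memory."""
    return memory_gb * STORAGE_GB_PER_MEMORY_GB


laptop_memory_gb = 4
laptop_storage_gb = 1000  # one terabyte, in decimal units

equivalent = storage_equivalent_gb(laptop_memory_gb)
share_of_disk = equivalent / laptop_storage_gb

print(f"{laptop_memory_gb} GB of memory costs about as much as {equivalent} GB of storage")
print(f"That is roughly {share_of_disk:.0%} of the price of the laptop's whole disk")
```

Under that assumption, the laptop's small slice of memory costs nearly as much as its entire disk.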

Performance and Memory

Memory is precious, but it performs. When developers have to make a process run faster, one way is to change the code to use memory instead of disk storage. If the developers go overboard and use more memory than the system has available, their optimization backfires. When the system starts to run out of memory, it moves data from memory to slower disk storage, and the system begins to bog down as the processor waits for the slow-moving data. The same thing happens when several memory-hungry processes run at the same time.
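A minimal sketch of that trade, assuming a program that reads the same file over and over: keep the contents in memory after the first read. The `load_text` function and the `settings.txt` file are hypothetical; `functools.lru_cache` is a standard Python tool, and its `maxsize` limit is one way to avoid going overboard.

```python
import functools
import tempfile
from pathlib import Path


@functools.lru_cache(maxsize=128)  # bounded, so the cache cannot grow without limit
def load_text(path):
    """Read a file from disk the first time; repeat calls are answered from memory."""
    return Path(path).read_text(encoding="utf-8")


with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp) / "settings.txt"  # hypothetical file for the demo
    sample.write_text("color=blue\n", encoding="utf-8")

    first = load_text(str(sample))   # goes to the disk
    second = load_text(str(sample))  # served from memory, no disk access

info = load_text.cache_info()
print(f"disk reads: {info.misses}, answered from memory: {info.hits}")
```

The second call never touches the disk, which is the whole point. The cost is that the file's contents now occupy memory for as long as they stay in the cache.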

Memory Hogging

There are many reasons for heavy memory consumption. One I already mentioned: a process has been designed to consume more memory in order to perform well. Processes running above their designed capacity can also use extra memory. For example, a process designed to support ten simultaneous users might use much more memory if it is supporting a hundred users. Sometimes excess memory usage comes from defective code. A “memory leak” is a classic defect that causes processes to consume more and more memory the longer the process stays running.

Whatever the reason, when memory consumption reaches beyond the optimal level for your computing device, performance will slooooow. The cursor may get jerky. The keyboard will seem to hang, then spit out a clump of characters. When you attempt to start something new, there is a long pause. Nothing works right. Not pleasant. Not pleasant at all.

Memory Shortage Diagnostics

The task list is the first tool I use to determine if I have a memory shortage and what is causing it.

On Windows 10, a convenient way to get to the task list is to right-click on the Windows icon in the lower left-hand corner of the screen. The task list, labeled Task Manager, will be below the line not too far from the center of the menu. Click on it.

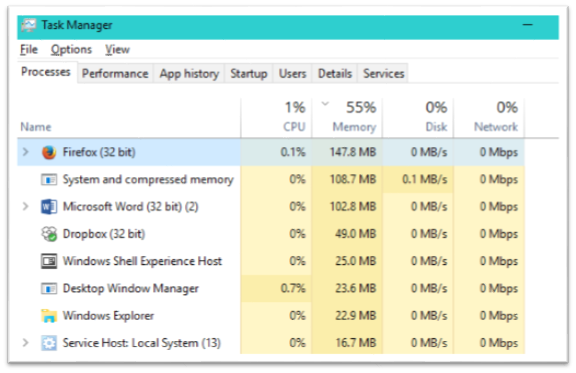

You will get something like this.

In this snapshot, 55% of available fast memory is in use. That is a good number. When the percentage gets above 60%, into the 70s and 80s, your system will begin to suffer. Here, I’ve clicked on the memory column header to sort the processes by memory usage. In this case, I had Firefox up when I took this screenshot and it is the biggest memory consumer. Firefox uses a lot of memory so popping up a new screen is snappy. Therefore, I don’t mind that it is a big consumer. If one of the heavy hitters were an application that I was not using, I would shut it down to free up memory for a performance boost.
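The two things I read off the task list, the percentage in use and the biggest consumers, are simple arithmetic and a sort. Here is a rough sketch of both. The process names and sizes are invented sample data, not readings from my machine, and the function names are my own.

```python
MIB = 1024 ** 2  # bytes in one mebibyte


def percent_in_use(used_bytes, total_bytes):
    """Return the share of memory in use, as a percentage."""
    return 100 * used_bytes / total_bytes


def biggest_consumers(processes, top=3):
    """Sort (name, bytes) pairs by memory, largest first, like clicking the column header."""
    return sorted(processes, key=lambda p: p[1], reverse=True)[:top]


# Invented sample data for illustration only.
sample = [
    ("explorer.exe", 120 * MIB),
    ("firefox.exe", 900 * MIB),
    ("notepad.exe", 15 * MIB),
    ("antivirus.exe", 200 * MIB),
]

usage = percent_in_use(4400 * MIB, 8000 * MIB)
print(f"memory in use: {usage:.0f}%")

for name, size in biggest_consumers(sample):
    print(f"{name:<15} {size // MIB:>5} MiB")
```

With these made-up numbers the usage comes out at 55%, and the browser lands at the top of the list, much as in the screenshot.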

Memory Hogging Malware

If a memory-hogging process happens to be malware, it’s bad. You seldom know what the malware is doing. It could be generating spam or sending large quantities of messages to a server that the hacker is trying to overwhelm. It could, perish the thought, be encrypting your files, preparing to demand ransom for their return. Hogging memory is not the only way malware can slow your computer, but it is one way.

As I mentioned in my previous blog, I Google a process name if I am not familiar with it. Usually it is a Windows internal process I don’t know about, but sometimes it will show up as malware.

Emergency Measures

Now we get into some risky stuff that could force you to restore your system, but could also avoid restoring the system. You will have to decide for yourself how much risk you are willing to take, and own the results.

Removing the executable file of the malware can stop the malware’s damage. If you want to remove the file from the system, right-click on the process name in the task list, then click on “open file location.” From there, you can delete the executable, but you should think about that before jumping in.

It is always better to remove an application through “Uninstall or change a program” in the Control Panel if you can. Removal is often more complicated than removing a single file. Sometimes configuration files and registry entries have to be modified and several files deleted. The uninstall in Control Panel is supposed to clean up everything, and, unless the author of the uninstall was sloppy, it usually does.

For malware, there usually is no uninstall. If an anti-virus tool detects malware, it will do a better job of uninstalling than you can do manually. So try an anti-virus scan of the malware executable file. If the scan finds and eradicates the malware, you win!

Manual Kill

However, if the scan fails and there is no uninstall, I delete any malware files I can find. Deleting the wrong file by mistake will not harm your hardware, but it could require reinstalling your operating system and restoring from a backup. (Highly unpleasant.) However, in my opinion, if your system is already damaged by malware, deleting will probably do no more damage than has already been done and may stop the damage. Therefore, when all else fails, I usually choose to delete immediately to limit the damage. This is a risk I am willing to take, but it is a risk.

If the malware is clever (bad!) it may regenerate the file you deleted. Also, deleting a file out from under a running process may not kill the process, so you will have to hit the “End Task” button to kill it.

Manual Kill Checklist

- Verify that the process is malware

- Run a virus scan on the file and let the anti-virus take care of it

- Check “Uninstall or change a program” in the Control Panel on the off chance you can uninstall it there

- If all else fails, try killing it with the “End Task” button and deleting the file

Good luck! You could save the day for yourself. Or ruin it. I’ve seen it both ways.